GPT-4o (“o” for “omni”) from OpenAI, the Gemini family of models from Google, and the Claude family of models from Anthropic are the state-of-the-art large language models (LLMs) currently available in the Generative Artificial Intelligence space.

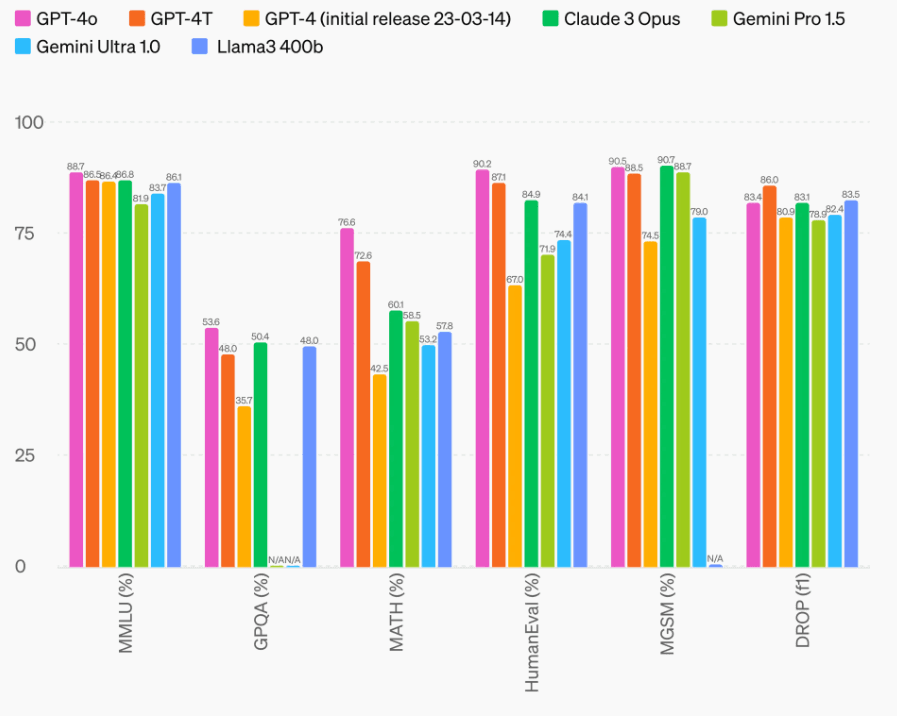

Benchmarks

In most evaluation benchmarks, OpenAI's GPT-4o has demonstrated superior performance compared to the various Gemini model variants from Google.

API Access

Both GPT-4o and the Gemini model variants are accessed through an API, and each provider requires an API key.
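As a rough illustration of how the API key is used, the sketch below builds (but does not send) one request for each provider. The endpoint URLs, header names, and the `gemini-1.5-flash` model ID reflect the publicly documented REST APIs at the time of writing and should be checked against the current references. The helper names and environment variable names are assumptions made for this example.

```python
import json
import os

# Endpoints and header names as publicly documented at the time of writing;
# verify them against the current API references before relying on them.
OPENAI_URL = "https://api.openai.com/v1/chat/completions"
GEMINI_URL = (
    "https://generativelanguage.googleapis.com/v1beta/"
    "models/gemini-1.5-flash:generateContent"
)


def build_openai_request(prompt: str, api_key: str) -> dict:
    """Describe a GPT-4o chat request; the key travels as a Bearer token."""
    return {
        "url": OPENAI_URL,
        "headers": {
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        "body": json.dumps({
            "model": "gpt-4o",
            "messages": [{"role": "user", "content": prompt}],
        }),
    }


def build_gemini_request(prompt: str, api_key: str) -> dict:
    """Describe a Gemini request; the key travels in the x-goog-api-key header."""
    return {
        "url": GEMINI_URL,
        "headers": {
            "x-goog-api-key": api_key,
            "Content-Type": "application/json",
        },
        "body": json.dumps({"contents": [{"parts": [{"text": prompt}]}]}),
    }


if __name__ == "__main__":
    # Environment variable names are a convention assumed for this sketch.
    openai_key = os.environ.get("OPENAI_API_KEY", "<missing>")
    request = build_openai_request("Hello", openai_key)
    print(request["url"])
```

Keeping the key in an environment variable rather than in source code is the usual practice for both services.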

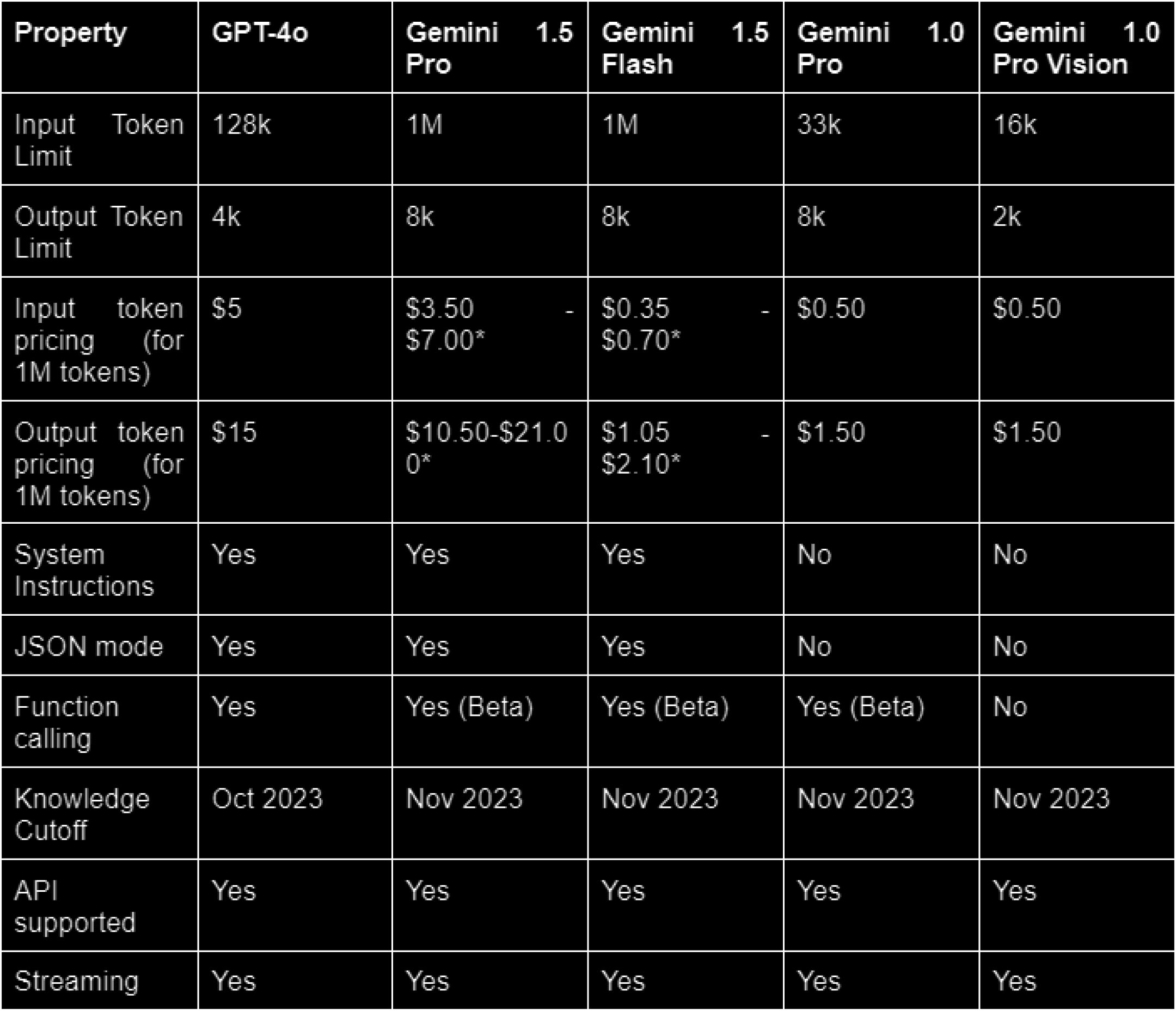

In-Depth Model Specifications

Feature Comparison

Context Caching: Google offers context caching for the Gemini 1.5 Pro variant, which reduces cost when consecutive API calls contain repeated content.

Batch API: OpenAI offers a Batch API for sending groups of requests asynchronously at 50% lower cost.

Speed/Throughput: Gemini 1.5 Flash is the fastest of the compared models in terms of tokens per second.
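To make the Batch API item more concrete, here is a minimal sketch of preparing a batch input file and estimating the discounted cost. It assumes the JSONL request format described in OpenAI's Batch API documentation (one JSON object per line with `custom_id`, `method`, `url`, and `body`). The function names and the `request-N` naming scheme are hypothetical, and the 50% figure is taken from the text above rather than from a live price list.

```python
import json


def to_batch_jsonl(prompts: list[str], model: str = "gpt-4o") -> str:
    """Serialize prompts as one JSON request per line for a batch input file."""
    lines = []
    for i, prompt in enumerate(prompts):
        lines.append(json.dumps({
            "custom_id": f"request-{i}",  # hypothetical naming scheme
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [{"role": "user", "content": prompt}],
            },
        }))
    return "\n".join(lines)


def batch_cost(standard_cost: float, discount: float = 0.5) -> float:
    """Apply the batch discount (50% per the text above) to a standard cost."""
    return standard_cost * (1.0 - discount)


if __name__ == "__main__":
    print(to_batch_jsonl(["Summarize document A", "Summarize document B"]))
    print(batch_cost(10.0))
```

The trade-off is latency: batched requests are processed asynchronously, so this route suits workloads that do not need an immediate answer.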

Nature of Responses

Gemini has been recognized for its ability to make responses sound more human. GPT-4o responses are more consistent for analytical questions.

RAG vs Gemini's 1M Long Context Window

Although context windows are expected to keep growing over time, retrieval-augmented generation (RAG) is still the optimal and scalable approach for a large corpus of external knowledge.
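The idea behind RAG can be sketched in a few lines: rather than placing the whole corpus in the prompt, only the chunks most relevant to the question are retrieved and sent to the model. The example below is a toy version that ranks chunks by bag-of-words cosine similarity. Production systems typically use embedding models and a vector store instead, and all function names here are illustrative.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = tokenize(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Assemble a prompt that contains only the retrieved context."""
    context = "\n".join(retrieve(query, chunks, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    corpus = [
        "The batch API lowers cost",
        "Gemini has a long context window",
        "Cats sleep a lot",
    ]
    print(build_prompt("context window length", corpus, k=1))
```

Because the prompt size depends on `k` rather than on the size of the corpus, the cost per request stays roughly constant as the knowledge base grows.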

Rate Limits

OpenAI applies tier-based rate limits. Google provides Gemini in free-of-charge and pay-as-you-go modes.
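Whatever the tier or mode, client code usually has to cope with hitting a limit. A common pattern is to retry with exponential backoff, sketched below. `RateLimitError` is a hypothetical stand-in for whatever error a given SDK raises when a rate limit is exceeded (typically an HTTP 429 response), and the retry count and delays are arbitrary defaults rather than provider recommendations.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) error."""


def call_with_backoff(fn, max_retries=5, base_delay=1.0, sleep=time.sleep):
    """Call fn(), retrying with exponential backoff on RateLimitError."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_retries:
                raise  # out of retries: surface the error to the caller
            delay = base_delay * (2 ** attempt)
            # Up to 10% jitter so that many clients do not retry in lockstep.
            sleep(delay + random.uniform(0, delay * 0.1))
```

Passing `sleep` as a parameter keeps the helper easy to test without real waiting.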

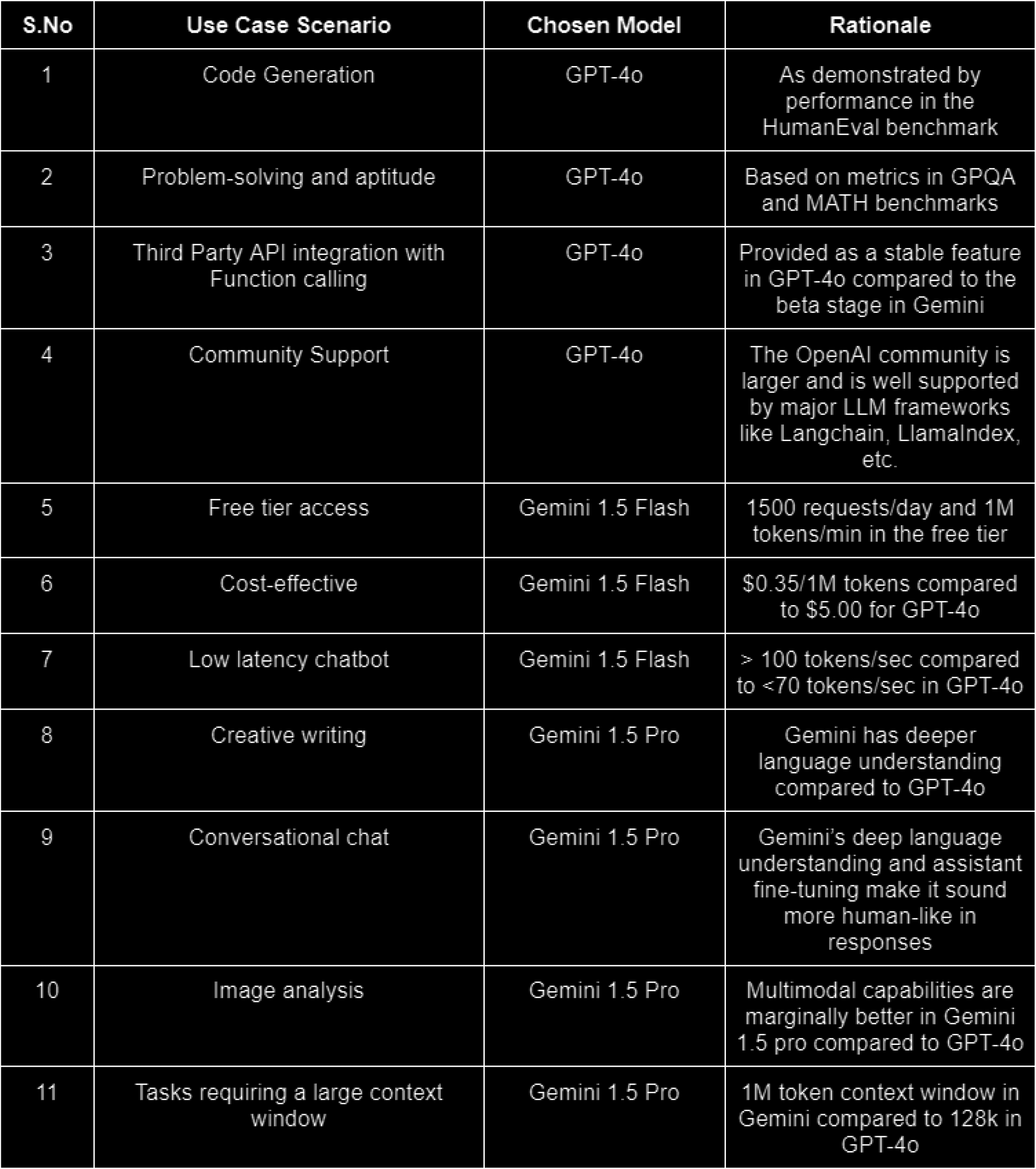

Conclusion

For problems requiring analysis, mathematical reasoning, and code generation, GPT-4o is the best option. For creative tasks, the Gemini model variants are well suited. For tasks that need a longer context window, Gemini is the most suitable choice with its 1M-token input context limit.